BSL Game Controller

Roles: Solo Developer

Tools Used: Unreal Engine 5, MediaPipe, Jira, Figma

Release Date: TBC

Development Time: 2 Months

Available at: GitHub

This project was developed as my final thesis piece during my Master's degree at Falmouth University.

This project explores how British Sign Language (BSL) can be repurposed as an alternative real-time game input method using a standard webcam, combining my interests in game accessibility, alternative controllers, and human–computer interaction. I designed and developed a developer-focused Unreal Engine 5 plugin built on MediaPipe, enabling designers and programmers to integrate custom gesture recognition into their game projects.

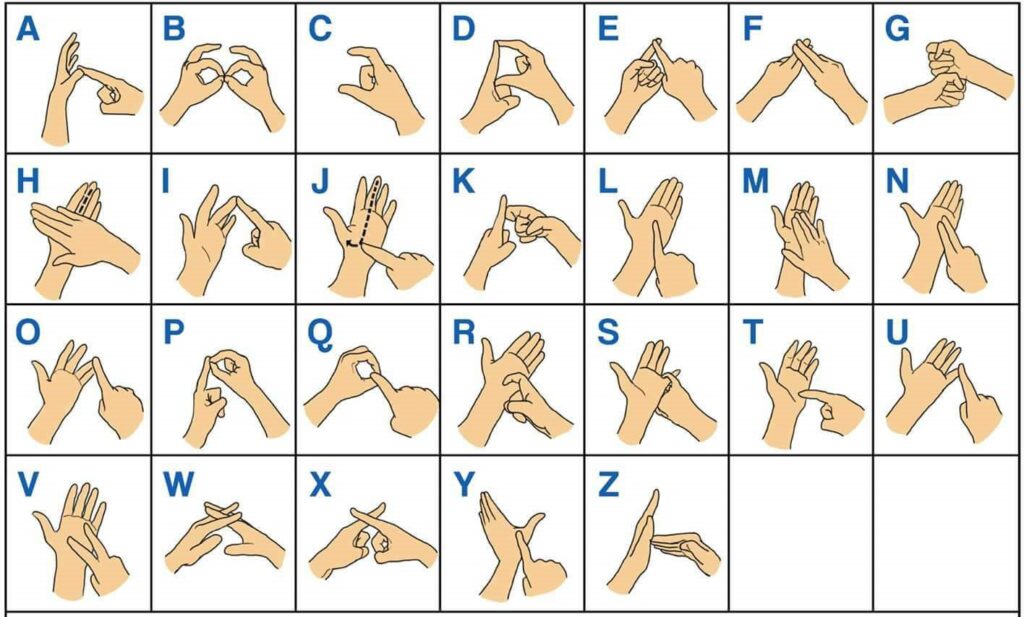

The demo supports recognition of the BSL alphabet, simple directional gestures, and intuitive control signals such as thumbs up/down and grab, demonstrating how gesture-based input can function alongside or in place of traditional control schemes. A strong focus was placed on low-latency input, scalability, and designer-friendly tools for ease of use.

Some of the project features include:

> Custom Gesture System Design

Designed and implemented a flexible gesture recognition system supporting both one-handed and two-handed signs. Defined a reusable gesture set structure, enabling rapid creation and iteration of new gestures without changes to core recognition logic.

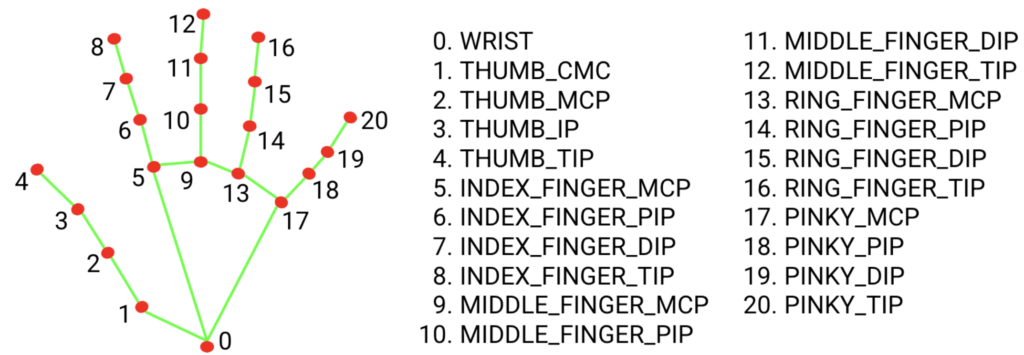

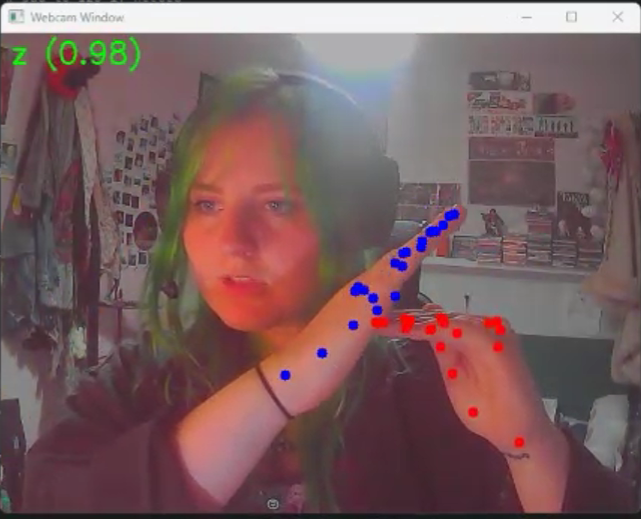

> 3D Hand Landmark Processing

Integrated accurate 21-point three-dimensional hand landmark recognition to drive gesture detection. Ensured landmark data remained stable and usable within real-time gameplay and UI interaction contexts.
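One common way to keep landmark data stable between frames is an exponential moving average over each of the 21 points. The sketch below shows that idea only; which filter the project actually uses is not stated here, and `LandmarkSmoother` and its `alpha` parameter are assumptions for illustration.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, ...]


class LandmarkSmoother:
    """Exponential moving average over a fixed list of 3D landmarks.

    alpha close to 1.0 follows the raw input closely (less lag, more jitter);
    alpha close to 0.0 smooths heavily (less jitter, more lag).
    """

    def __init__(self, alpha: float = 0.5) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self._state: Optional[List[Point]] = None

    def update(self, landmarks: Sequence[Sequence[float]]) -> List[Point]:
        """Blend a new frame of landmarks into the smoothed state."""
        if self._state is None:
            # First frame: nothing to blend with, so adopt it as-is.
            self._state = [tuple(p) for p in landmarks]
        else:
            a = self.alpha
            self._state = [
                tuple(a * n + (1.0 - a) * o for n, o in zip(new, old))
                for new, old in zip(landmarks, self._state)
            ]
        return self._state
```

The trade-off is latency against jitter: heavier smoothing gives steadier input for UI interaction, while lighter smoothing suits fast gameplay gestures.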

> Machine Learning Model Training & Integration

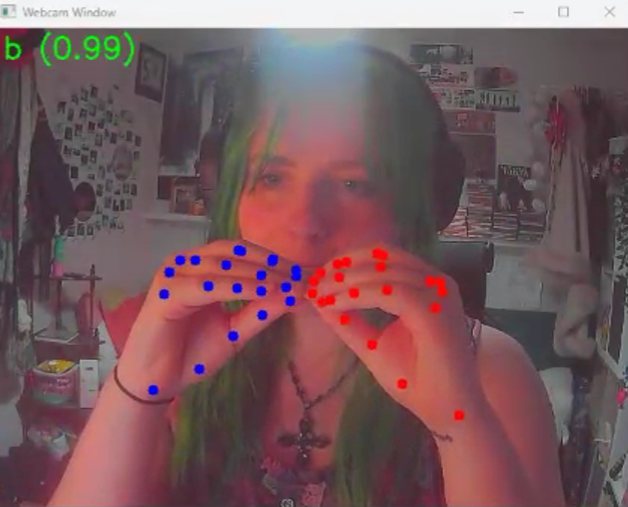

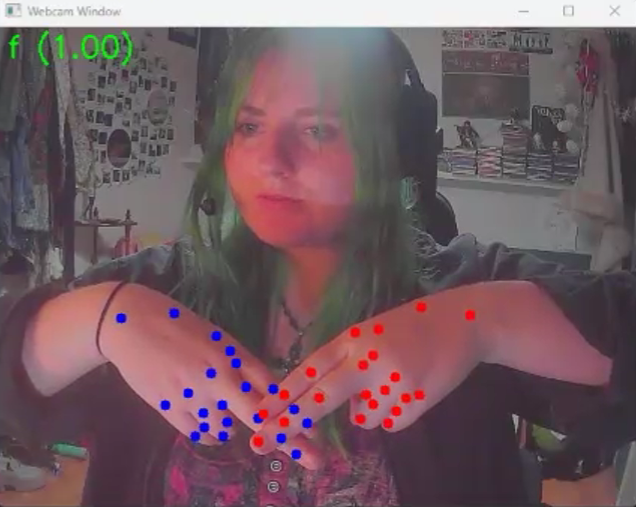

Trained a custom machine learning model using TensorFlow Lite to recognise complex hand gestures, including two-handed BSL signs. Optimised the model for real-time inference, balancing recognition accuracy with performance constraints.

> Real-Time Data Pipeline (MediaPipe -> Unreal Engine)

Built a real-time communication pipeline that transmits hand tracking data over UDP sockets from a Python MediaPipe process into Unreal Engine 5 (C++). Designed the system to be lightweight, low-latency, and robust against packet loss during live webcam input.
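The sending side of such a pipeline can be sketched as follows. The packet layout (a sequence number, a hand index, then 21 x/y/z floats), the host and port defaults, and the `LatestOnlyReceiver` helper are assumptions made for illustration, not the project's actual wire format. The sequence number lets a receiver discard late or duplicate datagrams, which is one simple way to tolerate UDP packet loss and reordering.

```python
import socket
import struct

NUM_LANDMARKS = 21
# Assumed layout, little-endian: uint32 sequence, uint8 hand index,
# then 21 * (x, y, z) as float32.
PACKET_FORMAT = "<IB" + "f" * (NUM_LANDMARKS * 3)
PACKET_SIZE = struct.calcsize(PACKET_FORMAT)


def pack_hand(seq, hand_index, landmarks):
    """Serialise one hand's landmarks into a fixed-size datagram."""
    flat = [coord for point in landmarks for coord in point]
    if len(flat) != NUM_LANDMARKS * 3:
        raise ValueError("expected 21 landmarks with 3 coordinates each")
    return struct.pack(PACKET_FORMAT, seq & 0xFFFFFFFF, hand_index, *flat)


def unpack_hand(data):
    """Decode a datagram; return None if it has the wrong size."""
    if len(data) != PACKET_SIZE:
        return None
    fields = struct.unpack(PACKET_FORMAT, data)
    coords = fields[2:]
    landmarks = [tuple(coords[i:i + 3]) for i in range(0, len(coords), 3)]
    return fields[0], fields[1], landmarks


def send_hand(sock, seq, hand_index, landmarks, host="127.0.0.1", port=5005):
    """Fire-and-forget send; host and port are placeholder values."""
    sock.sendto(pack_hand(seq, hand_index, landmarks), (host, port))


class LatestOnlyReceiver:
    """Keeps only the newest packet per hand; drops stale or duplicate ones.

    Sequence wraparound is ignored to keep the sketch short.
    """

    def __init__(self):
        self.last_seq = {}

    def accept(self, data):
        decoded = unpack_hand(data)
        if decoded is None:
            return None
        seq, hand_index, _ = decoded
        if hand_index in self.last_seq and seq <= self.last_seq[hand_index]:
            return None  # arrived late or duplicated; a newer frame was already used
        self.last_seq[hand_index] = seq
        return decoded
```

A sender would create its socket once with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` and call `send_hand` for each tracked frame. Because every datagram carries a complete frame, a lost packet costs one frame of input rather than stalling the stream.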

> Unreal Engine Plugin Development

Developed a custom Unreal Engine plugin featuring a background UDP listener to receive and process incoming webcam and gesture data. Structured the plugin to be modular, adaptable, and efficient to run alongside gameplay systems.

> Designer-Friendly Blueprint Integration

Created custom Blueprint nodes exposing gesture input in a format consistent with Unreal’s Enhanced Input system. Enabled designers to bind gestures to gameplay and UI actions without requiring technical or machine-learning knowledge.

MORE DETAILS COMING SOON…